
Embedding Models and Vector Databases

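💡 Learning Objectives

- Understand vector representations

- Vectors and text embeddings

- Computing similarity between vectors

- Embedding models: principles and selection

- Vector databases: overview and core principles

- Core operations of the Chroma vector database

- Further study: the Milvus vector database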

1. 向量表征(Vector Representation)

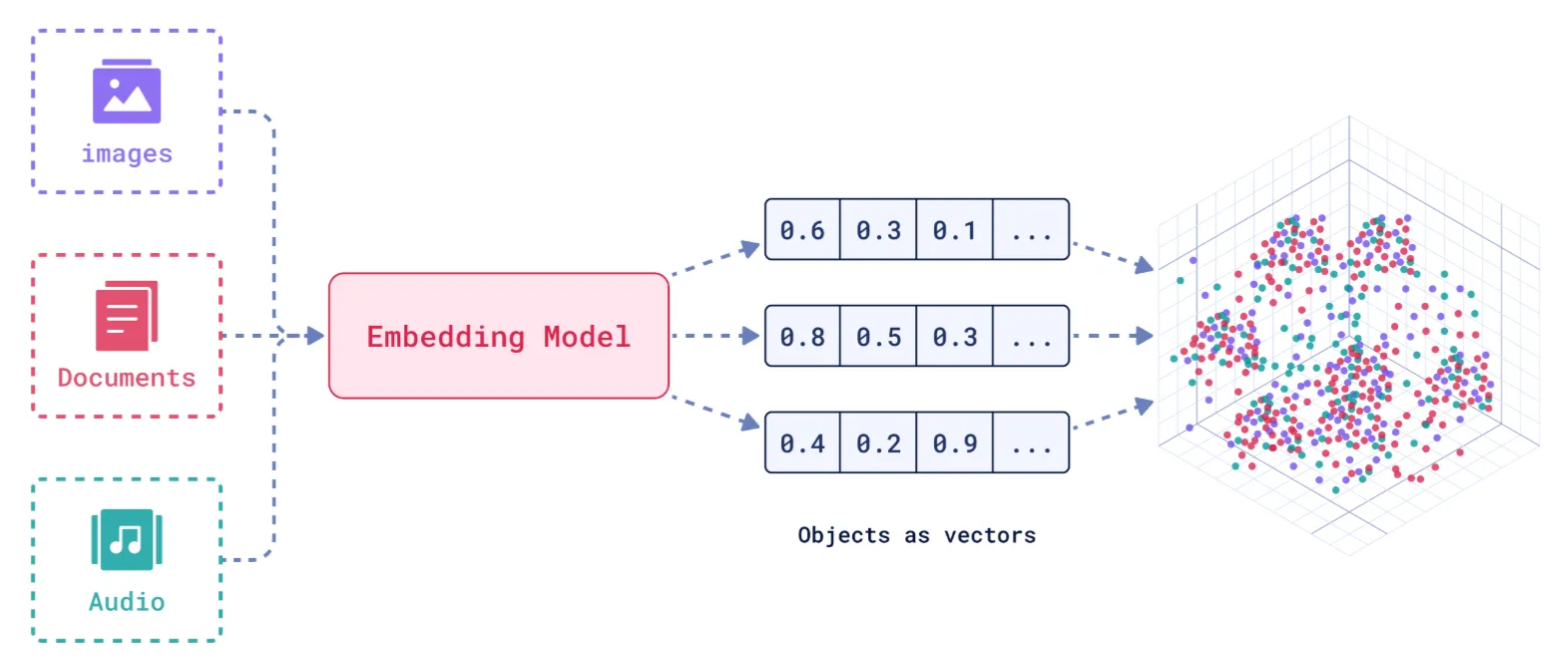

在人工智能领域,向量表征(Vector Representation)是核心概念之一。通过将文本、图像、声音、行为甚至复杂关系转化为高维向量(Embedding),AI系统能够以数学方式理解和处理现实世界中的复杂信息。这种表征方式为机器学习模型提供了统一的“语言”。

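1.1. The Essence of Vector Representation

The essence of vector representation: everything can be expressed mathematically.

(1) Core ideas

Dimensionality reduction and abstraction: map a complex object (such as a passage of text or an image) into a dense vector space of much lower dimension than the raw data, while preserving its key semantics or features.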

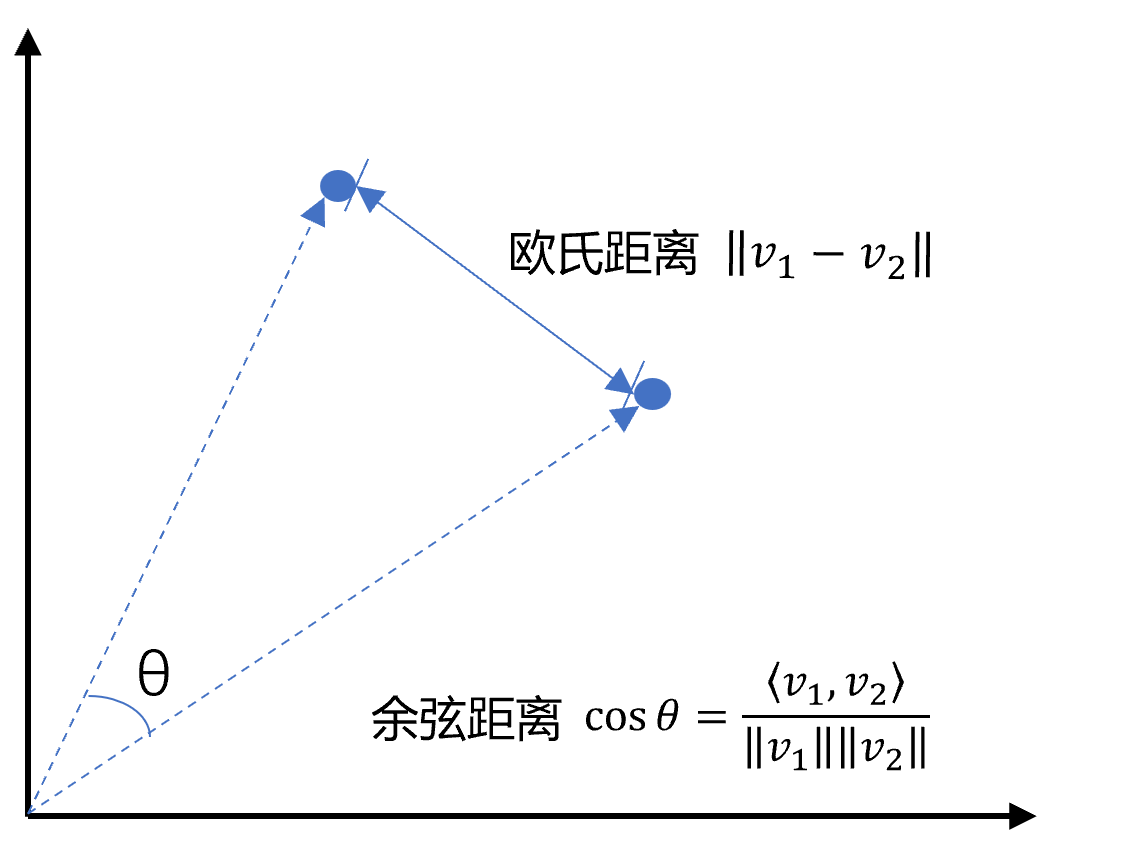

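Similarity measurement: closeness in the vector space (measured, for example, by cosine similarity) reflects how semantically related two objects are. For example, the vectors for "cat" and "dog" are closer to each other than the vectors for "cat" and "car".

(2) Mathematical significance

Automated feature engineering: traditional machine learning relies on hand-designed features (such as TF-IDF for text), whereas vector representations use deep learning to extract high-level abstract features automatically.

Cross-modal unification: data from different modalities, such as text, images, and video, can be mapped into the same vector space, which enables cross-modal retrieval (for example, searching for images with text).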

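1.2. Typical Applications of Vector Representation

(1) Natural language processing (NLP)

Word vectors (Word2Vec, GloVe): each word is mapped to a single static vector, so related words end up close together. Because the vector is fixed, a polysemous word such as "apple" (the fruit versus the company) gets one vector for all of its senses; telling the senses apart requires context-dependent models (see the dynamic vectors in section 1.3).

Sentence vectors (BERT, Sentence-BERT): encode the meaning of a whole sentence, used for text similarity and clustering (for example, matching questions to answers in customer service).

Knowledge graph embeddings (TransE, RotatE): represent entities and relations as vectors to support reasoning (for example, scoring the plausibility of the triple "Paris - capital of - France").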
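To make the triple-scoring idea concrete, here is a minimal sketch of a TransE-style score, assuming the usual formulation in which a true triple satisfies head + relation ≈ tail. The 2-D vectors and the names `transe_score`, `paris`, `france`, `germany`, and `capital_of` are hypothetical, hand-picked values for illustration, not trained embeddings.

```python
import math


def transe_score(head, relation, tail):
    """Return ||head + relation - tail||_2; a lower value means a more plausible triple."""
    return math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))


# Hypothetical 2-D embeddings, chosen by hand for illustration only.
paris = [1.0, 2.0]
france = [3.0, 3.0]
germany = [6.0, 1.0]
capital_of = [2.0, 1.0]

print(transe_score(paris, capital_of, france))   # 0.0 (plausible triple)
print(transe_score(paris, capital_of, germany))  # about 3.606 (implausible triple)
```

A real system would learn these vectors from a knowledge graph; the sketch only shows how a learned embedding turns "is this triple credible?" into a distance computation.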

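(2) Computer vision (CV)

Image feature vectors (CNN or Transformer features): models such as ResNet and ViT extract image semantics for image-to-image search and image classification.

Cross-modal alignment (CLIP): maps images and text into the same space, which enables searching for images from a text description, or the reverse (finding text that matches an image).

(3) Recommender systems

- User/item vectors: a user's behavior sequence (clicks, purchases) is encoded as a user vector and item attributes are encoded as an item vector; the inner product of the two predicts how well the item matches the user's interests (as in YouTube's recommendation algorithm).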
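The inner-product matching described above can be sketched in a few lines. The vectors, item names, and the helper `rank_items` below are hypothetical; a production recommender would learn the vectors from behavior logs.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(u, v))


def rank_items(user_vec, item_vecs):
    """Return item ids sorted by inner-product score, best match first."""
    scores = {item_id: dot(user_vec, vec) for item_id, vec in item_vecs.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical 3-D user and item vectors for illustration.
user = [0.9, 0.1, 0.0]
items = {
    "sci_fi_movie": [0.8, 0.2, 0.0],
    "cooking_show": [0.0, 0.1, 0.9],
    "tech_review": [0.6, 0.6, 0.1],
}

print(rank_items(user, items))  # ['sci_fi_movie', 'tech_review', 'cooking_show']
```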

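(4) Modeling complex systems

Graph neural networks (GNN): users, items, and interaction events in a social network are all represented as vectors that capture the network's structure (for example, for community detection and fraud detection).

Time-series vectorization: stock prices or sensor readings are encoded as vectors to predict future trends (for example, with LSTM or Transformer encoders).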

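1.3. Implementing Vector Representations

(1) Classic methods

Unsupervised learning: Word2Vec learns word vectors by predicting context words (Skip-Gram), while GloVe learns them by factorizing word co-occurrence statistics.

Supervised learning: fine-tune a pre-trained model (such as BERT) on a specific task and extract task-specific vectors.

(2) Frontier directions

Contrastive learning: build positive and negative sample pairs (for example, different crops of the same image form a positive pair), pull the vectors of positive pairs together, and push negatives apart (SimCLR, MoCo).

Multimodal fusion: fuse text, image, speech, and other modalities into a unified vector (for example, Google's MUM model).

Dynamic vectors: adjust the vector according to context (for example, through the Transformer's attention mechanism), which addresses the inability of static word vectors to handle polysemy.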
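The "pull positives together, push negatives apart" objective can be written down directly. Below is a minimal sketch of an InfoNCE-style loss for a single anchor, assuming cosine similarity and a temperature of 0.1; SimCLR and MoCo use losses of this general family, but the function names, toy vectors, and temperature here are illustrative assumptions rather than either paper's exact recipe.

```python
import math


def cosine(u, v):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v)))


def info_nce(anchor, positive, negatives, temperature=0.1):
    """Negative log-softmax of the positive pair's similarity among all candidates."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, neg) / temperature for neg in negatives]
    max_logit = max(logits)  # subtract the max before exponentiating, for numerical stability
    log_sum_exp = max_logit + math.log(sum(math.exp(x - max_logit) for x in logits))
    return log_sum_exp - logits[0]


anchor = [1.0, 0.0]
negatives = [[0.0, 1.0], [-1.0, 0.0]]

# A positive that already lies close to the anchor gives a small loss;
# a positive that lies far away gives a larger loss.
loss_aligned = info_nce(anchor, [0.9, 0.1], negatives)
loss_misaligned = info_nce(anchor, [0.0, 1.0], negatives)
print(loss_aligned < loss_misaligned)  # True
```

Training minimizes this loss over many anchors, which is what moves positive pairs closer and negatives farther apart in the vector space.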

2. 什么是向量

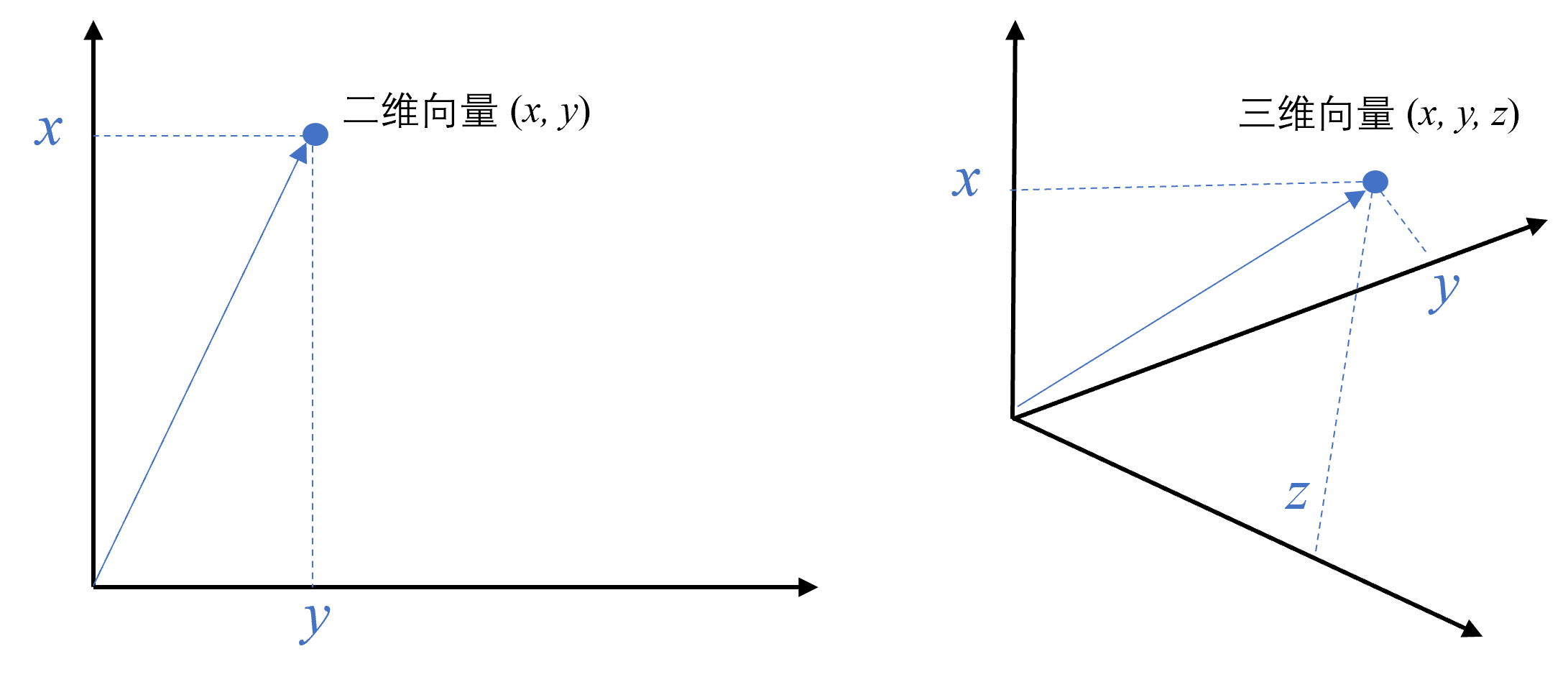

向量是一种有大小和方向的数学对象。它可以表示为从一个点到另一个点的有向线段。例如,二维空间中的向量可以表示为

以此类推,我可以用一组坐标

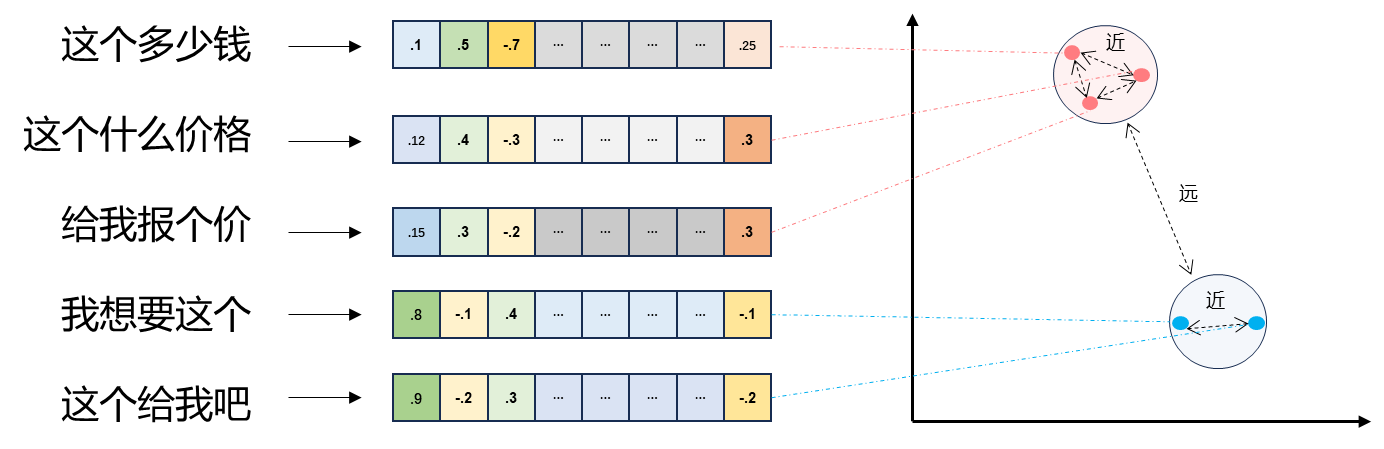

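2.1 Text Embeddings

- Convert a text into a set of floating-point numbers, that is, a vector; such text vectors are also called embeddings

- Distances can be computed between vectors, and how near or far two vectors are corresponds to how semantically similar the texts are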

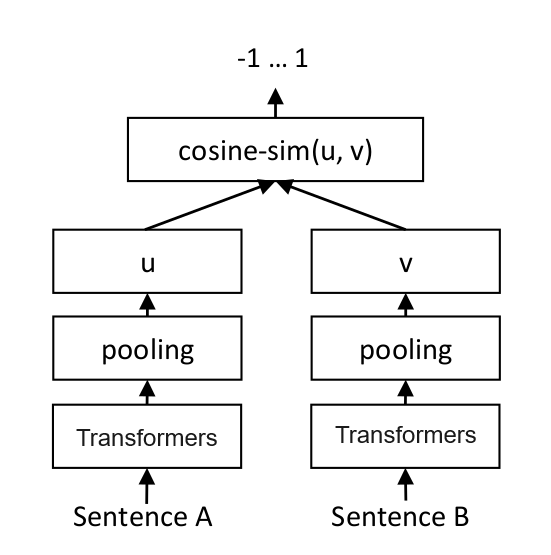

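2.2 How Text Embeddings Are Obtained (Optional)

- Build sentence-pair samples that are related (positive examples) or unrelated (negative examples)

- Train a two-tower (bi-encoder) model so that positive pairs end up close together and negative pairs far apart

For example:

Further reading: https://www.sbert.net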

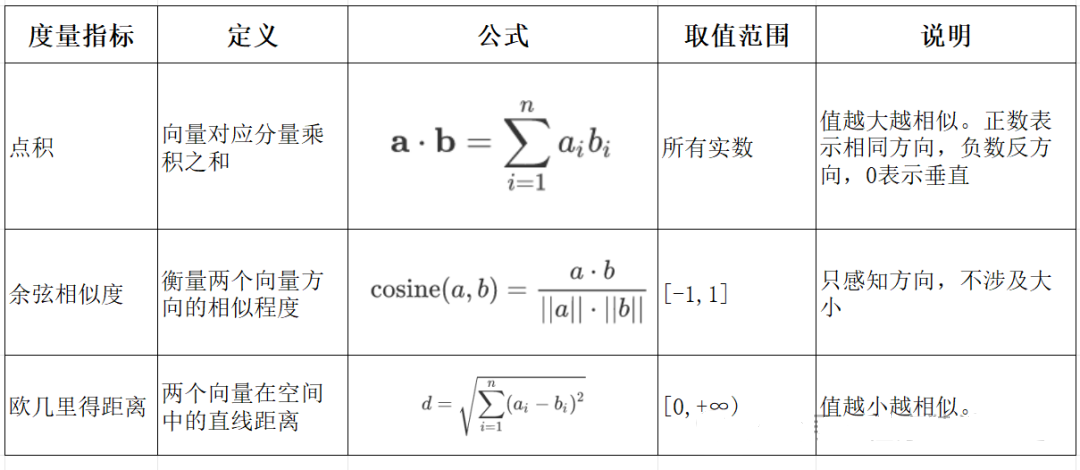
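One common way to express "positives close, negatives far" as a training signal is a triplet margin loss; it is only one of several objectives used for sentence encoders, and the source does not say which one was used here. The sketch below assumes Euclidean distance and a margin of 1.0, with hypothetical 2-D sentence vectors.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """max(0, d(anchor, positive) - d(anchor, negative) + margin)."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


# Hypothetical sentence vectors produced by the two towers.
anchor = [0.0, 0.0]
related = [0.0, 1.0]    # distance 1 from the anchor
unrelated = [3.0, 4.0]  # distance 5 from the anchor

print(triplet_loss(anchor, related, unrelated))  # 0.0: already well separated, nothing to learn
print(triplet_loss(anchor, unrelated, related))  # 5.0: the roles are swapped, so the loss is large
```

During training, the gradient of this loss with respect to the encoder's parameters moves related sentences together and unrelated ones apart until the margin is satisfied.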

2.3 向量间的相似度计算

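Cosine similarity measures how similar two vectors are by the cosine of the angle between them: it equals the dot product of the two vectors divided by the product of their lengths (norms).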
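Written as a formula, with a small worked example (the vectors $(1, 0)$ and $(1, 1)$ are chosen here purely for illustration):

```latex
\cos(\mathbf{a}, \mathbf{b})
  = \frac{\mathbf{a} \cdot \mathbf{b}}{\lVert \mathbf{a} \rVert \, \lVert \mathbf{b} \rVert}
  = \frac{\sum_{i} a_i b_i}{\sqrt{\sum_{i} a_i^2} \, \sqrt{\sum_{i} b_i^2}}

% Worked example: a = (1, 0), b = (1, 1), an angle of 45 degrees
\cos\bigl((1, 0), (1, 1)\bigr)
  = \frac{1 \cdot 1 + 0 \cdot 1}{\sqrt{1} \, \sqrt{2}}
  = \frac{1}{\sqrt{2}} \approx 0.707
```

The value ranges from -1 (opposite directions) through 0 (orthogonal) to 1 (same direction), and it does not depend on the lengths of the vectors.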

```python
# !pip install --upgrade numpy
# !pip install --upgrade openai
```

```python
import os

from openai import OpenAI

# The API key must be configured in the system environment variables beforehand.
# OpenAI key: obtain it (1) through a proxy service or (2) by registering and purchasing on the official site.
# Alibaba Cloud Bailian (DashScope) configuration:
#   DASHSCOPE_API_KEY   sk-xxx
#   DASHSCOPE_BASE_URL  https://dashscope.aliyuncs.com/compatible-mode/v1

client = OpenAI(
    api_key=os.getenv("DASHSCOPE_API_KEY"),  # if the environment variable is not set, put your API key here
    base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",  # base_url of the Bailian service
)
```

```python
import numpy as np
from numpy import dot
from numpy.linalg import norm
```

```python
def cos_sim(a, b):
    '''Cosine similarity: the larger, the more similar.'''
    return dot(a, b) / (norm(a) * norm(b))


def l2(a, b):
    '''Euclidean distance: the smaller, the more similar.'''
    x = np.asarray(a) - np.asarray(b)
    return norm(x)
```

```python
def get_embeddings(texts, model="text-embedding-v1", dimensions=None):
    '''Wrapper around the OpenAI-compatible embeddings endpoint.'''
    if model == "text-embedding-v1":
        # text-embedding-v1 is always called without a custom `dimensions` argument
        dimensions = None
    if dimensions:
        data = client.embeddings.create(
            input=texts, model=model, dimensions=dimensions).data
    else:
        data = client.embeddings.create(input=texts, model=model).data
    return [x.embedding for x in data]
```

```python
# Sample text (a Chinese slogan); any string works here
test_query = ["聚客AI - 用科技力量,构建智能未来!"]
vec = get_embeddings(test_query)[0]
print(f"Total dimension: {len(vec)}")
print(f"First 10 elements: {vec[:10]}")
```

Output

```text
Total dimension: 1536
First 10 elements: [
1.8666456937789917,
-3.2402632236480713,
1.2597650289535522,
-2.3682942390441895,
2.38277530670166,
-3.507119655609131,
1.461685299873352,
-4.431000709533691,
-2.722412586212158,
1.1500697135925293]
```

```python
query = "国际争端"  # "international disputes"

# Cross-lingual queries are supported as well
# query = "global conflicts"

documents = [
    "联合国就苏丹达尔富尔地区大规模暴力事件发出警告",  # UN warns about large-scale violence in Darfur, Sudan
    "土耳其、芬兰、瑞典与北约代表将继续就瑞典“入约”问题进行谈判",  # talks continue on Sweden's NATO accession
    "日本岐阜市陆上自卫队射击场内发生枪击事件 3人受伤",  # shooting at a JGSDF firing range in Gifu, 3 injured
    "国家游泳中心(水立方):恢复游泳、嬉水乐园等水上项目运营",  # National Aquatics Center reopens water activities
    "我国首次在空间站开展舱外辐射生物学暴露实验",  # first extravehicular radiation-biology experiment on the space station
]

query_vec = get_embeddings([query])[0]
doc_vecs = get_embeddings(documents)

print("Cosine similarity between the query and itself: {:.2f}".format(cos_sim(query_vec, query_vec)))
print("Cosine similarity between the query and the documents:")
for vec in doc_vecs:
    print(cos_sim(query_vec, vec))

print()

print("Euclidean distance between the query and itself: {:.2f}".format(l2(query_vec, query_vec)))
print("Euclidean distance between the query and the documents:")
for vec in doc_vecs:
    print(l2(query_vec, vec))
```

Output

```text
Cosine similarity between the query and itself: 1.00
Cosine similarity between the query and the documents:
0.33911047648730697
0.2688252041673728
0.07006357800727325
0.10452984187535311
0.12916023809848173

Euclidean distance between the query and itself: 0.00
Euclidean distance between the query and the documents:
109.51274483356154
109.95511077641505
128.00071081019382
123.82186767906518
134.32520931177121
```
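In the results above, the two metrics do not rank the five documents identically: the fifth document has a higher cosine similarity than the fourth, yet a larger Euclidean distance. That can happen because these vectors are not unit length. For unit-length vectors the two metrics are tied together by the identity ‖a − b‖² = 2 − 2·cos(a, b), so they always produce the same ranking. The following is a small stdlib-only check of that identity; the helpers re-implement `cos_sim` and `l2` without NumPy, and the 2-D vectors are arbitrary illustrative values.

```python
import math


def norm(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(x * x for x in v))


def normalize(v):
    """Scale a non-zero vector to unit length."""
    n = norm(v)
    return [x / n for x in v]


def cos_sim(a, b):
    return sum(x * y for x, y in zip(a, b)) / (norm(a) * norm(b))


def l2(a, b):
    return norm([x - y for x, y in zip(a, b)])


a = [3.0, 4.0]
b = [4.0, 3.0]
ua, ub = normalize(a), normalize(b)

squared_distance = l2(ua, ub) ** 2        # distance between the unit vectors, squared
from_cosine = 2 - 2 * cos_sim(a, b)       # cosine is unchanged by normalization
print(abs(squared_distance - from_cosine) < 1e-12)  # True
```

In practice this means that if embeddings are normalized before being stored, choosing between cosine and Euclidean distance affects only the scale of the scores, not the order of the results.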

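3. Embedding Models

3.1. What Is an Embedding?

Embedding is the process of converting unstructured data into vectors: a neural network or a large model projects discrete real-world data into a high-dimensional space, where the distances between data points reflect how similar the data are in the real world.

3.2. Embedding Model Concepts and Principles

1. The essence of an embedding model

An embedding model maps discrete data (such as text or images) into a continuous vector space. Using high-dimensional vectors (for example, 768 or 3072 dimensions), the model captures semantic information so that semantically similar texts lie closer together in the vector space. For example, "forgot password" and "account locked" are encoded as nearby vectors, which supports semantic retrieval rather than keyword matching alone.

2. Core roles

Semantic encoding: converts text, images, and other data into vectors that retain contextual information (for example, via BERT's [CLS] token or mean pooling).

Similarity computation: measures how related two vectors are with cosine similarity, Euclidean distance, or similar metrics, which underpins applications such as retrieval-augmented generation (RAG) and recommender systems.

Dimensionality reduction: compresses complex data into low-dimensional dense vectors, improving storage and computation efficiency.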
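The two pooling strategies mentioned above (taking the first, [CLS]-position vector, or averaging all token vectors) can be sketched as follows. The token vectors and the attention mask are hypothetical stand-ins for what an encoder such as BERT would output; real models produce hundreds of dimensions per token.

```python
def cls_pool(token_vectors):
    """Use the first token's vector (the [CLS] position) as the sentence vector."""
    return list(token_vectors[0])


def mean_pool(token_vectors, attention_mask):
    """Average the vectors of real tokens (mask == 1), ignoring padding positions."""
    kept = [vec for vec, mask in zip(token_vectors, attention_mask) if mask == 1]
    if not kept:
        raise ValueError("attention_mask selects no tokens")
    dim = len(kept[0])
    return [sum(vec[i] for vec in kept) / len(kept) for i in range(dim)]


# Hypothetical encoder output: three token positions, the last one is padding.
token_vectors = [[1.0, 2.0], [3.0, 4.0], [0.0, 0.0]]
attention_mask = [1, 1, 0]

print(cls_pool(token_vectors))                   # [1.0, 2.0]
print(mean_pool(token_vectors, attention_mask))  # [2.0, 3.0]
```

Which strategy works better depends on how the model was trained, so it is usually safest to use the pooling that the model's authors document.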

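3. Key technical principles

Context dependence: modern models (such as BGE-M3) adjust vectors dynamically, capturing the meaning of a polysemous word in each context.

Training methods: context-prediction objectives (such as Word2Vec's Skip-gram/CBOW), contrastive learning on positive and negative pairs, and pre-training followed by fine-tuning (such as BERT).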

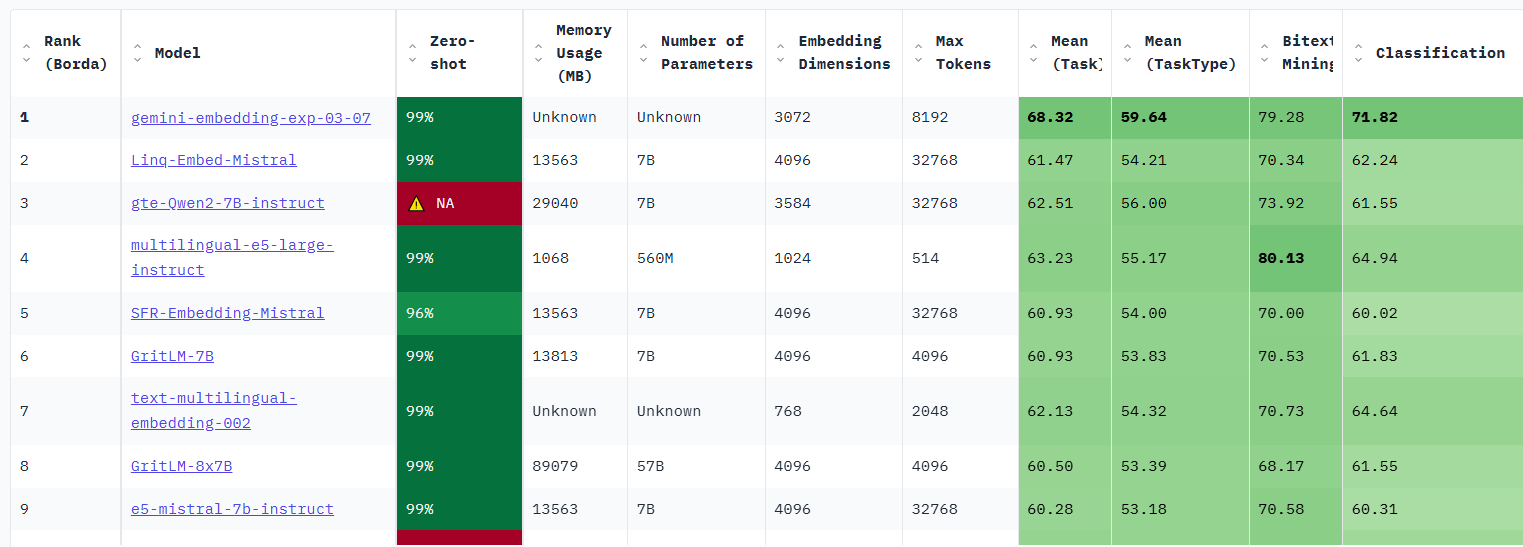

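3.3. Mainstream Embedding Models: Categories and Selection Guide

An embedding model converts text into numeric vectors that capture semantic information, so that a computer can understand and compare the "meaning" of content. Factors to consider when choosing an embedding model:

| Factor | Description |
|---|---|
| Task type | Match the task's needs (question answering, search, clustering, etc.) |
| Domain | General-purpose vs. specialized domains (medicine, law, etc.) |
| Multilingual support | Consider when multilingual content must be handled |
| Dimensions | Trade off information richness against computational cost |
| License terms | Open source vs. proprietary service |
| Max tokens | A context window size that suits your inputs |

Best practice: test several embedding models for your specific application, and evaluate them on your actual data rather than relying only on general benchmarks.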
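One simple way to compare candidate models on your own data is recall@k over a small labeled set of queries. The sketch below assumes you have already run each model and collected, per query, the ranked document ids it returned and the set of ids a human marked as relevant; the function names and the sample runs are hypothetical.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant_ids:
        raise ValueError("relevant_ids must not be empty")
    hits = len(set(ranked_ids[:k]) & set(relevant_ids))
    return hits / len(relevant_ids)


def mean_recall_at_k(runs, k):
    """Average recall@k over (ranked_ids, relevant_ids) pairs, one pair per query."""
    return sum(recall_at_k(ranked, relevant, k) for ranked, relevant in runs) / len(runs)


# Hypothetical retrieval results from two candidate models on the same two queries.
model_a_runs = [(["d1", "d7", "d3"], {"d1", "d3"}), (["d9", "d2", "d4"], {"d2"})]
model_b_runs = [(["d7", "d8", "d1"], {"d1", "d3"}), (["d4", "d9", "d2"], {"d2"})]

print(mean_recall_at_k(model_a_runs, k=2))  # 0.75
print(mean_recall_at_k(model_b_runs, k=2))  # 0.0
```

Even a few dozen labeled queries from the target domain often separate candidate models more clearly than a public leaderboard score does.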

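1. General-purpose, all-round models

- BGE-M3: developed by the Beijing Academy of Artificial Intelligence (BAAI); supports multiple languages and hybrid retrieval (dense + sparse vectors), handles 8K-token context, and suits enterprise knowledge bases.

- NV-Embed-v2: based on Mistral-7B, with high retrieval accuracy (reported MTEB retrieval score of 62.65), but requires substantial compute.

2. Vertical, domain-specialized models

- Chinese scenarios: BGE-large-zh-v1.5 (contracts and policy documents), M3E-base (social media analysis).

- Multimodal scenarios: BGE-VL (image-text cross-modal retrieval), which jointly encodes OCR text and image features.

3. Lightweight deployment models

- nomic-embed-text: 768-dimensional vectors, reportedly about 3x faster at inference than OpenAI's embedding models; suits edge devices.

- gte-qwen2-1.5b-instruct: 1.5B parameters, runs with 16 GB of GPU memory; suits prototype validation by startup teams.

Selection decision tree:

Mainly Chinese → BGE series > M3E;

Multilingual needs → BGE-M3 > multilingual-e5;

Limited budget → open-source models (such as Nomic Embed)

Embedding Leaderboard

https://huggingface.co/spaces/mteb/leaderboard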

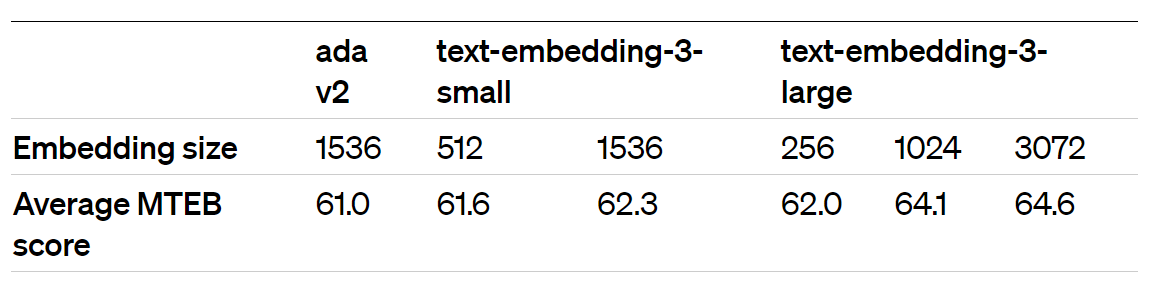

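3.4. OpenAI's Two New Embedding Models

On January 25, 2024, OpenAI released two new embedding models:

- text-embedding-3-large

- text-embedding-3-small

Their most notable feature is support for shortening the vector to a custom number of dimensions, which lowers the cost of vector retrieval and similarity computation with almost no loss in final quality.

Put simply: larger is more accurate, smaller is faster. OpenAI published evaluation results for both models with the release.

Note: MTEB is a public, large-scale, multi-task benchmark for embedding models.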
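Besides requesting a shorter vector from the API with the `dimensions` parameter, a shortened vector can also be derived locally by keeping the leading values and rescaling to unit length. The sketch below shows that manual step; `shorten_embedding` is a hypothetical helper, and the approach only preserves quality for models trained to support truncation, which should be treated as an assumption for any model other than the two listed above.

```python
import math


def shorten_embedding(vec, dimensions):
    """Keep the first `dimensions` values of `vec` and rescale the result to unit length."""
    if not 0 < dimensions <= len(vec):
        raise ValueError("dimensions must be between 1 and len(vec)")
    head = vec[:dimensions]
    length = math.sqrt(sum(x * x for x in head))
    if length == 0:
        raise ValueError("cannot normalize an all-zero vector")
    return [x / length for x in head]


full = [3.0, 4.0, 12.0]             # toy 3-D "embedding" for illustration
short = shorten_embedding(full, 2)  # keep 2 dimensions, then re-normalize
print(short)  # [0.6, 0.8]
```

Re-normalizing matters because cosine and dot-product scores computed on truncated, un-normalized vectors are no longer comparable across items.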

3.5. Using an Embedding Model

- Calling through an API

```python
import os

from openai import OpenAI

client = OpenAI(
    api_key=os.getenv("DASHSCOPE_API_KEY"),  # if the environment variable is not set, put your API key here
    base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",  # base_url of the Bailian service
)

completion = client.embeddings.create(
    model="text-embedding-v3",
    input='国际争端',  # "international disputes"
    dimensions=1024,
    encoding_format="float"
)

print(completion.model_dump_json())
```

Output (the `embedding` array holds the requested 1024 values; only the first three and the last one are shown here)

```text
{"data":[{"embedding":[-0.014563590288162231,0.017968913540244102,-0.03981896489858627, ..., -0.030843837186694145],"index":0,"object":"embedding"}],"model":"text-embedding-v3","object":"list","usage":{"prompt_tokens":3,"total_tokens":3},"id":"593398f2-c64d-97be-80cf-f8246b1c0456"}
```

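4. Vector Databases

4.1. What Is a Vector Database?

A vector database is middleware designed specifically for vector retrieval.

A system that efficiently stores, quickly retrieves, and manages high-dimensional vector data is called a vector database.

It is a database dedicated to storing and retrieving high-dimensional vectors: data (such as text, images, and audio) is converted into vectors by an embedding model, and efficient indexing and search algorithms make retrieval fast.

The core role of a vector database is similarity search: finding the vectors most similar to a target vector by computing a distance or similarity between vectors (such as Euclidean distance or cosine similarity). It is especially suited to unstructured data and supports scenarios such as semantic search and content recommendation.

Core functions:

- Vector storage

- Similarity measurement

- Similarity search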

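4.2. How Are Embedding Vectors Stored and Retrieved?

Storage: a vector database stores each embedding as a point in a high-dimensional space and assigns it a unique identifier (ID); metadata can be stored alongside it.

Retrieval: vectors are indexed and searched quickly with approximate nearest neighbor (ANN) algorithms (such as product quantization, PQ). For example, libraries and databases such as FAISS and Milvus speed up retrieval with efficient index structures.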
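The storage and retrieval model described above can be illustrated with a toy in-memory store that keeps an ID, a vector, and metadata per entry and answers queries by exact (brute-force) cosine search. `TinyVectorStore` is a hypothetical class written for this sketch; real vector databases replace the linear scan with an ANN index so that queries stay fast at millions of vectors.

```python
import math


class TinyVectorStore:
    """Hypothetical in-memory store: exact nearest-neighbor search by cosine similarity."""

    def __init__(self):
        self._rows = {}  # id -> (vector, metadata)

    def add(self, item_id, vector, metadata=None):
        """Store a vector under a unique id, with optional metadata."""
        self._rows[item_id] = (list(vector), metadata or {})

    @staticmethod
    def _cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

    def query(self, vector, n_results=3):
        """Return up to n_results (similarity, id, metadata) tuples, most similar first."""
        scored = [
            (self._cosine(vector, vec), item_id, meta)
            for item_id, (vec, meta) in self._rows.items()
        ]
        scored.sort(key=lambda row: row[0], reverse=True)  # O(N) scan, then sort
        return scored[:n_results]


store = TinyVectorStore()
store.add("cat", [1.0, 0.1], {"kind": "animal"})
store.add("dog", [0.9, 0.2], {"kind": "animal"})
store.add("car", [0.0, 1.0], {"kind": "vehicle"})

top = store.query([1.0, 0.0], n_results=2)
print([item_id for _, item_id, _ in top])  # ['cat', 'dog']
```

The exact scan is a useful baseline: the results of an ANN index are typically evaluated against it to measure how much recall is traded for speed.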

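4.3 Vector Databases vs. Traditional Databases

- Data type

  - Traditional database: stores structured data (tables, rows, columns).

  - Vector database: stores high-dimensional vectors, suited to unstructured data.

- Query method

  - Traditional database: relies on exact matching and comparison (such as =, <, >).

  - Vector database: based on similarity or distance metrics (such as Euclidean distance or cosine similarity).

- Use cases

  - Traditional database: suited to transaction records and structured information management.

  - Vector database: suited to semantic search, content recommendation, and other scenarios that need similarity computation.

A few key points to clarify:

- The purpose of a vector database is fast retrieval;

- A vector database does not generate vectors itself; the vectors are produced by an embedding model;

- Vector databases and traditional relational databases are complementary, not substitutes; in practice they are often used together, depending on the requirements.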

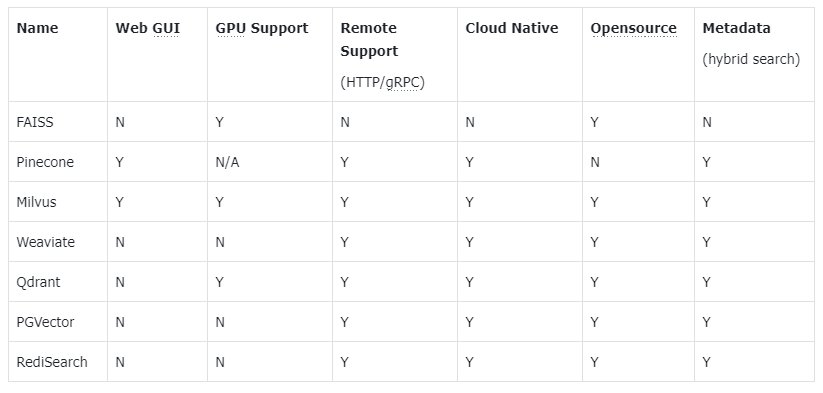

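4.4. Comparison of Mainstream Vector Databases

- FAISS: vector search library open-sourced by Meta https://github.com/facebookresearch/faiss

- Pinecone: commercial vector database, cloud service only https://www.pinecone.io/

- Milvus: open-source vector database, also offered as a cloud service https://milvus.io/

- Weaviate: open-source vector database, also offered as a cloud service https://weaviate.io/

- Qdrant: open-source vector database, also offered as a cloud service https://qdrant.tech/

- PGVector: open-source vector search extension for Postgres https://github.com/pgvector/pgvector

- RediSearch: open-source vector search engine for Redis https://github.com/RediSearch/RediSearch

- ElasticSearch also supports vector search https://www.elastic.co/enterprise-search/vector-search

Further reading: https://guangzhengli.com/blog/zh/vector-database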

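4.5. The Chroma Vector Database

Official documentation: https://docs.trychroma.com/docs/overview/introduction

1. What is Chroma?

Chroma is an open-source vector database designed for efficient storage and retrieval of high-dimensional vectors. Its core capability is semantic similarity search: it supports fast matching of embeddings of text, images, and other data, and is widely used for LLM context augmentation (RAG), recommender systems, and multimodal retrieval. Unlike a traditional database, Chroma measures how related two items are by vector distance (such as cosine or Euclidean distance) rather than by keyword matching.

2. Core strengths

- Lightweight and easy to use: embedded in your code as a Python/JS package with no separate deployment required, which suits rapid prototyping.

- Flexible integration: supports custom embedding models (such as OpenAI or HuggingFace) and works with frameworks such as LangChain.

- High-performance retrieval: uses an HNSW index, aiming at millisecond-level responses over millions of vectors.

- Multiple storage modes: in-memory mode for development and debugging, persistent mode for writing data to disk in production.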

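4.6. Installing and Configuring Chroma

1. Installation

Install ChromaDB with the Python package manager:

```python
# Install the Chroma vector database
# !pip install chromadb
```

2. Initializing the client

- In-memory mode (generally not recommended, since the data is not persisted):

```python
import chromadb

client = chromadb.Client()
```

- Persistent mode:

```python
import chromadb

# Save data to a local directory
client = chromadb.PersistentClient(path="/path/to/save")
```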

4.7. Chroma Core Workflow

1. Collections

A collection is the basic unit for managing data in Chroma, similar to a table in a relational database:

(1) Create a collection

```python
from chromadb.utils import embedding_functions

# By default, Chroma uses DefaultEmbeddingFunction, which is based on the
# Sentence Transformers all-MiniLM-L6-v2 model
default_ef = embedding_functions.DefaultEmbeddingFunction()

# To use an OpenAI embedding model instead (text-embedding-ada-002 is the
# default when model_name is omitted):
# openai_ef = embedding_functions.OpenAIEmbeddingFunction(
#     api_key="YOUR_API_KEY",
#     model_name="text-embedding-3-small"
# )

collection = client.create_collection(
    name="my_collection",
    configuration={
        # HNSW: a graph-based approximate nearest neighbor (ANN) index
        "hnsw": {
            "space": "cosine",  # measure distance with cosine
            "ef_search": 100,
            "ef_construction": 100,
            "max_neighbors": 16,
            "num_threads": 4
        },
        # Embedding model used by this collection
        "embedding_function": default_ef
    }
)
```

(2) Get a collection

```python
collection = client.get_collection(name="my_collection")
```

- peek() - returns a list of the first 10 items in the collection.

- count() - returns the number of items in the collection.

- modify() - renames the collection.

```python
print(collection.peek())
print(collection.count())
# collection.modify(name="new_name")
```

Output

```text
{'ids': ['id1', 'id2', 'id3'], 'embeddings': array([[ 0.00291795, 0.10026005, 0.02475987, ..., -0.00089897,
0.03750684, 0.00060963],
[-0.04344053, 0.0862214 , 0.0596776 , ..., 0.07138078,
0.07886603, 0.0202222 ],
[-0.02276114, 0.07069635, -0.05875918, ..., 0.05860774,
0.01676111, -0.02023437]], shape=(3, 384)), 'documents': ['RAG是一种检索增强生成技术', '向量数据库存储文档的嵌入表示', '在机器学习领域,智能体(Agent)通常指能够感知环境、做出决策并采取行动以实现特定目标的实体'], 'uris': None, 'included': ['metadatas', 'documents', 'embeddings'], 'data': None, 'metadatas': [{'source': 'RAG'}, {'source': '向量数据库'}, {'source': 'Agent'}]}
3
```

(3) Delete a collection

```python
client.delete_collection(name="my_collection")
```

2. Adding data

Embeddings can be generated automatically or supplied by hand:

```python
# Option 1: generate embeddings automatically (with the collection's embedding model)
collection.add(
    documents = ["RAG是一种检索增强生成技术", "向量数据库存储文档的嵌入表示", "在机器学习领域,智能体(Agent)通常指能够感知环境、做出决策并采取行动以实现特定目标的实体"],
    metadatas = [{"source": "RAG"}, {"source": "向量数据库"}, {"source": "Agent"}],
    ids = ["id1", "id2", "id3"]
)

# Option 2: pass in precomputed embeddings
# collection.add(
#     embeddings = [[0.1, 0.2, ...], [0.3, 0.4, ...]],
#     documents = ["文本1", "文本2"],
#     ids = ["id3", "id4"]
# )
```

3. Querying data

- Text query (embedded automatically):

```python
results = collection.query(
    query_texts = ["RAG是什么?"],  # "What is RAG?"
    n_results = 3,
    # where = {"source": "RAG"},  # filter by metadata
    # where_document = {"$contains": "检索增强生成"}  # filter by document content
)

print(results)
```

Output (it lists only two results and shows an updated text for id1, which suggests it was captured after the update and delete operations in step 4 below)

```text
{'ids': [['id1', 'id2']], 'embeddings': None, 'documents': [['RAG是一种检索增强生成技术,在智能客服系统中大量使用', '向量数据库存储文档的嵌入表示']], 'uris': None, 'included': ['metadatas', 'documents', 'distances'], 'data': None, 'metadatas': [[{'source': 'RAG'}, {'source': '向量数据库'}]], 'distances': [[0.34913837909698486, 0.5758516788482666]]}
```

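- Vector query (supply your own query embedding):

```python
results = collection.query(
    # Placeholder: pass a complete vector whose length matches the
    # collection's embedding dimension
    query_embeddings = [[0.5, 0.6, ...]],
    n_results = 3
)
```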
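The `distances` returned by the text query above are distances, not similarities, so smaller values mean closer matches. Assuming the collection was created with `"space": "cosine"` as in section (1), Chroma's cosine distance is 1 minus the cosine similarity, so the values can be converted back as sketched below; `cosine_distance_to_similarity` is a hypothetical helper, and the two numbers are copied from the output shown above.

```python
def cosine_distance_to_similarity(distance):
    """Convert a cosine distance (1 - cosine similarity) back into a cosine similarity."""
    return 1.0 - distance


# Distances copied from the text-query result above.
distances = [0.34913837909698486, 0.5758516788482666]
similarities = [cosine_distance_to_similarity(d) for d in distances]

print([round(s, 3) for s in similarities])  # [0.651, 0.424]
```

With a different `space` setting (for example squared L2 or inner product), the conversion is different, so check the collection's configuration before interpreting the scores.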

4. Data management

Update data in a collection:

```python
collection.update(ids=["id1"], documents=["RAG是一种检索增强生成技术,在智能客服系统中大量使用"])
```

Delete data from a collection:

```python
collection.delete(ids=["id3"])
```

4.8. Chroma Client-Server Mode

- Server side

```sh
chroma run --path /db_path
```

- Client side

```python
import chromadb

chroma_client = chromadb.HttpClient(host='localhost', port=8000)
```

5. Further Study: Milvus

```html
<p style="text-align:center">Milvus architecture diagram</p>
<img src="/llm/Milvus.png" width="1200px" />
```

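6. Study Checklist

- Understand the principles of embedding models and how to choose one

- Install the Chroma vector database

- Complete the core operations using Chroma with local persistence

- Complete the core operations using Chroma in client-server mode

- Learn to use the Milvus vector database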